
Cloud migration has become a central part of enterprise technology strategy. For engineering leaders, cloud platforms promise faster release cycles, scalable infrastructure, and lower operational overhead for managing underlying systems.

Industry data reflects the scale of this shift. According to Grand View Research, the global cloud computing market is projected to grow from approximately $943 billion in 2025 to more than $1.18 trillion in 2026, highlighting how heavily enterprises are investing in cloud platforms.

For many organizations, cloud migration begins as an infrastructure decision. However, once applications begin operating in cloud environments, engineering teams often discover that validating system behavior becomes more challenging.

Services rely heavily on APIs, infrastructure scales dynamically, and deployments occur more frequently. As a result, cloud migration testing, cloud scalability testing, and cloud application performance testing become critical to ensuring that applications remain reliable, performant, and resilient as systems scale.

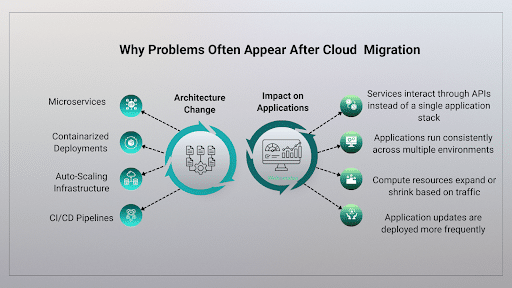

Why Problems Often Appear After Cloud Migration

Cloud environments change how applications operate. After migration, applications often run across multiple components instead of a single system.

Many organizations are already facing this complexity. The State of the Cloud Report 2025 found that 84% of organizations say managing cloud environments remains a major challenge.

Hence, for engineering leaders, the challenge is no longer just deploying applications; it is ensuring that systems behave predictably as traffic, services, and dependencies increase.

How Workflow Failures Occur in Distributed Cloud Systems

Failures in distributed systems do not always appear where teams expect them. An application may deploy successfully, and monitoring dashboards may show normal activity. Still, transactions begin to fail because something in the workflow between systems stops responding. This type of disruption often occurs in platforms where multiple services communicate through APIs.

Tax filing systems are a good example. During peak filing periods, electronic returns occasionally fail to process due to technical issues or system constraints. The IRS publishes a list of known e-file issues each tax season to help software providers identify and resolve these problems.

In cases like these, the application itself may still be working fine, but the disruption happens in the integration layer between systems. In distributed architectures, even a small API failure can interrupt an entire workflow. It is similar to a paper jam in a digital process; the system appears operational, but one blocked step prevents the process from moving forward.

This is why cloud migration testing must focus on how services interact under load, not just whether individual components function correctly.
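A minimal Python sketch of that idea follows. It is illustrative only: `QuotaService`, the step names, and the quota values are hypothetical in-process stand-ins, not real services. The same three-step workflow passes when a single request is sent and fails at one step once many requests arrive concurrently, which is the kind of integration-layer fault a component-level check would miss.

```python
import threading
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


class QuotaService:
    """Hypothetical stand-in for a remote API that accepts a fixed number of calls."""

    def __init__(self, name, quota):
        self.name = name
        self._remaining = quota
        self._lock = threading.Lock()

    def call(self, request_id):
        # The lock keeps the quota count exact when many threads call at once.
        with self._lock:
            if self._remaining <= 0:
                raise RuntimeError(f"{self.name}: quota exceeded")
            self._remaining -= 1
        return request_id


def run_workflow(steps, request_id):
    """Send one request through every step; return the failing step's name, or None."""
    for step in steps:
        try:
            step.call(request_id)
        except RuntimeError:
            return step.name
    return None


def run_under_load(steps, requests, workers=8):
    """Send many requests concurrently and count failures per step."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        outcomes = list(pool.map(lambda i: run_workflow(steps, i), range(requests)))
    return Counter(name for name in outcomes if name is not None)


def build_steps():
    # Assumed quotas: only the last step is undersized for the load.
    return [
        QuotaService("gateway", 1000),
        QuotaService("validation", 1000),
        QuotaService("submission", 25),
    ]


single = run_under_load(build_steps(), requests=1)
loaded = run_under_load(build_steps(), requests=100)
print(single)  # Counter()
print(loaded)  # Counter({'submission': 75})
```

In a real suite the stand-ins would be replaced by calls to a staging environment. The point of the sketch is only that the test reports which step blocked the workflow, rather than a generic pass or fail.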

Why APIs and Data Pipelines Must Be Tested After Cloud Migration

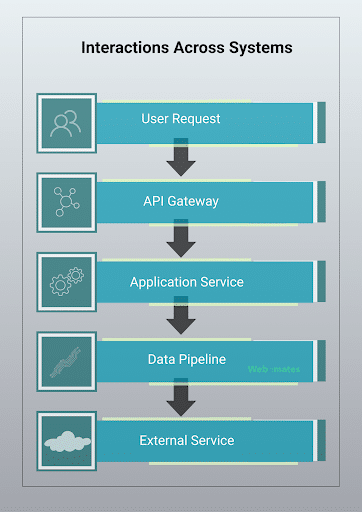

Modern cloud applications often rely on multiple services working together to complete a single user request.

Each step performs a different function in handling the request. The API gateway routes incoming traffic, services execute application logic, data pipelines process information, and external services return additional data.

Because these components operate as part of a connected chain, a disruption in one step can quickly affect downstream services.
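One way to see how a disruption travels downstream is to check each step's output before handing it to the next step. The Python sketch below is an assumption-laden illustration: the four step functions, the field names, and the required-field sets are all hypothetical. The data pipeline is deliberately broken so that, without per-step checks, the error only surfaces later in the external service call.

```python
def gateway(request):
    # Hypothetical API gateway: attaches the route the request was sent to.
    return {**request, "route": "/orders"}


def order_service(request):
    # Hypothetical application service: assigns an order id.
    return {**request, "order_id": 42}


def data_pipeline(request):
    # Simulated regression: the pipeline drops a field that later steps need.
    enriched = {k: v for k, v in request.items() if k != "order_id"}
    enriched["enriched"] = True
    return enriched


def pricing_api(request):
    # Hypothetical external service: raises KeyError if the order id is missing.
    return {**request, "price_for": request["order_id"]}


# Each entry: (step name, step function, fields its output must contain).
CHAIN = [
    ("gateway", gateway, {"route"}),
    ("order_service", order_service, {"route", "order_id"}),
    ("data_pipeline", data_pipeline, {"route", "order_id", "enriched"}),
    ("pricing_api", pricing_api, {"route", "order_id", "price_for"}),
]


def first_broken_step(chain, request):
    """Return a description of the first step that breaks its contract, or None."""
    data = request
    for name, step, required in chain:
        try:
            data = step(data)
        except Exception as exc:
            return f"{name}: raised {type(exc).__name__}"
        missing = required - set(data)
        if missing:
            return f"{name}: missing {sorted(missing)}"
    return None


def run_unchecked(chain, request):
    """Run the chain with no per-step checks; report where an error surfaces."""
    data = request
    for name, step, _ in chain:
        try:
            data = step(data)
        except Exception as exc:
            return f"{name}: raised {type(exc).__name__}"
    return None


print(first_broken_step(CHAIN, {"user": "u1"}))  # data_pipeline: missing ['order_id']
print(run_unchecked(CHAIN, {"user": "u1"}))      # pricing_api: raised KeyError
```

With the per-step check, the fault is attributed to the step that caused it. Without it, the failure shows up one step downstream, which is where teams would start looking first.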

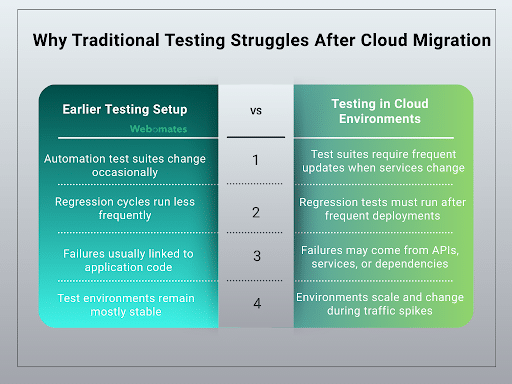

Why Traditional Testing Struggles After Cloud Migration

Traditional testing approaches were designed for applications that operated within relatively stable environments and had fewer external dependencies. As systems evolve and releases become more frequent, test suites built on those assumptions often require constant updates.

In many organizations, automation test suites are:

- Manually written and maintained

- Closely tied to specific application workflows

- Difficult to update when services or interfaces change

Maintaining these tests becomes increasingly difficult as applications change more frequently.

The testing workload also changes after cloud migration.

This shift requires testing strategies that can adapt to systems that evolve quickly and operate across multiple services.

How Webomates Helps Teams Validate Cloud Applications

Addressing the testing challenges of distributed cloud systems requires tools that can operate at the same pace as modern application releases.

Webomates, a cloud-based Testing-as-a-Service (TaaS) platform powered by patented AI-driven testing tools, combines intelligent test generation, automated execution, and continuous quality insights to help teams test applications operating in complex cloud environments.

Instead of relying on manually maintained automation frameworks, the platform continuously generates and executes test cases as applications evolve. This approach helps teams validate critical aspects of cloud systems more efficiently.

AI-driven test creation and maintenance: Webomates can automatically generate large sets of relevant test cases based on application behavior. In many cases, the platform can create thousands of test cases within a few weeks, allowing teams to rapidly establish meaningful regression coverage for new releases.

Self-healing automation: Changes in application interfaces often cause automated tests to fail even when the application is functioning correctly. Webomates’ self-healing automation adjusts tests automatically when interfaces change, reducing false failures and minimizing maintenance overhead.

Rapid regression execution: Modern cloud systems require frequent regression testing after deployments. Webomates can enable teams to complete full-feature regression validation in about 24 hours, with smaller module-level validation often completing in as little as eight hours, allowing teams to identify issues quickly after new releases.

Continuous testing insights: Webomates also provides visibility into testing results across the CI/CD pipeline, helping teams understand how application changes affect overall system reliability.

By combining AI-driven test generation, scalable regression execution, and continuous testing insights, Webomates helps organizations validate API interactions, service dependencies, and application performance across complex cloud environments.

Conclusion – Making Cloud Migration Deliver Business Value

Moving workloads to the cloud is only the beginning. Applications must continue to perform reliably as traffic grows and services interact across distributed systems.

One question teams should ask is simple: when demand increases, do your tests verify how the system behaves under those conditions?

As AWS CTO Werner Vogels famously observed, “Everything fails, all the time.”

The insight behind this statement is that modern distributed systems must be designed and tested to remain reliable even when components fail.

Systems that scale successfully are the ones built with this mindset, validated to remain stable even as architectures grow more complex.
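A test written with this mindset injects a failure on purpose and checks what the caller does next. The sketch below is a minimal Python illustration, not a description of any particular product: `FlakyDependency`, `fetch_with_retry`, the retry count, and the fallback value are all hypothetical.

```python
class FlakyDependency:
    """Hypothetical dependency that fails a set number of times before recovering."""

    def __init__(self, failures_before_success):
        self.remaining_failures = failures_before_success
        self.calls = 0

    def fetch(self):
        self.calls += 1
        if self.remaining_failures > 0:
            self.remaining_failures -= 1
            raise ConnectionError("injected failure")
        return "live-data"


def fetch_with_retry(dependency, attempts=3, fallback="cached-data"):
    """Try the dependency a bounded number of times, then fall back."""
    for _ in range(attempts):
        try:
            return dependency.fetch()
        except ConnectionError:
            continue
    return fallback


# Transient fault: two injected failures, then recovery on the third attempt.
transient = FlakyDependency(failures_before_success=2)
print(fetch_with_retry(transient), transient.calls)  # live-data 3

# Sustained outage: every attempt fails, so the caller degrades to the fallback.
outage = FlakyDependency(failures_before_success=10)
print(fetch_with_retry(outage), outage.calls)  # cached-data 3
```

Both cases assert on behavior during failure, not only on the happy path: the caller recovers from a short fault and stops retrying after a bounded number of attempts during a longer one.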

Business value ultimately depends on that stability. Testing strategies, therefore, need to keep pace with frequent releases so teams can deliver updates quickly while maintaining system reliability.

Webomates helps organizations validate system behavior continuously, giving engineering teams greater confidence as their cloud environments grow.

Moving to the cloud is only the first step.

Ready to validate your cloud applications with greater confidence?

Webo.AI, a Test Automation platform powered by Webomates, helps engineering teams test cloud systems faster by automatically generating test cases, validating API interactions, and identifying performance risks across distributed environments.

Explore Webo.AI or schedule a demo to see how intelligent cloud testing can support your next release.


FAQs

1. What is the difference between traditional testing and automated cloud testing?

Traditional testing often relies on manually written test suites designed for stable environments. Automated cloud testing uses intelligent automation to generate and execute tests continuously, making it easier to validate systems that change frequently and run across distributed services.

2. How can automated cloud testing improve release velocity?

Automated cloud testing allows engineering teams to run regression tests quickly after deployments. This helps detect failures early, maintain system reliability, and support faster release cycles without increasing manual testing effort.

3. How does Webomates support cloud migration testing?

Webomates is a cloud-based Testing-as-a-Service platform that uses AI-driven automation to generate and execute tests continuously. It helps organizations validate application performance, API interactions, and service dependencies across complex cloud environments.

4. Why do applications sometimes fail after cloud migration?

Applications often fail after migration because cloud systems introduce new dependencies such as microservices, APIs, and data pipelines. These interactions increase complexity, and failures may occur in integration layers even when individual components appear to be working normally.

Aseem, Founder & CEO of Webomates, created Webomates CQ, an AI-driven testing platform that cuts testing time by 10x with AiGenerate and accelerates test maintenance by 10x using AiHealing, with guaranteed 24-hour execution. A multi-technical Emmy award winner with AI automation patents, he writes about AI-first testing and faster, simpler software delivery.

