Accelerate Success with AI-Powered Test Automation – Smarter, Faster, Flawless

The conversation around AI-powered QA automation has accelerated dramatically over the last two years. Nearly every enterprise today is exploring how AI can improve software testing, reduce operational overhead, and accelerate release cycles. On paper, the promise is compelling: faster automation, broader test coverage, reduced manual effort, and increased engineering productivity.

And to be fair, the initial results often look impressive.

Teams adopt AI chatbots for testing workflows and quickly begin seeing improvements in repetitive execution tasks. Automation scripts are generated faster, responses become more immediate, and QA teams spend less time on routine activities. Leadership teams naturally view this as progress toward a more scalable and efficient testing organization.

But here’s the standard most teams miss: human + AI systems can “achieve performance that neither can attain individually” (arXiv).

Applications evolve continuously. Product architectures become increasingly interconnected, release cycles become shorter, and regression testing grows exponentially more difficult to maintain. As that complexity increases, many AI chatbot-driven QA systems begin struggling to keep pace.

The problem is not with AI itself. The problem is that most AI chatbots were designed for isolated interactions, while enterprise QA operates as a continuous system built on historical context, workflow memory, and evolving dependencies.

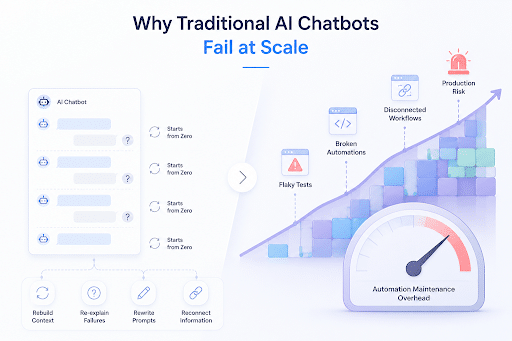

Why Traditional AI Chatbots Fail at Scale

In enterprise environments, testing is not simply about executing scripts. It is about understanding how changes across systems, workflows, and releases impact business stability over time. What failed in the previous sprint matters in the next deployment. A flaky test today may become a production outage tomorrow. A seemingly minor UI update can disrupt entire automation pipelines across multiple teams.

Yet most AI chatbot systems approach testing without continuity. Every interaction effectively begins from zero. Teams repeatedly rebuild context, re-explain failures, rewrite prompts, and manually reconnect information that the system itself does not retain. Over time, the efficiency gains organizations initially experienced begin eroding under the weight of growing automation maintenance overhead.

This is where many organizations begin realizing that AI-driven testing requires more than conversational automation. It requires systems capable of understanding workflows, retaining operational intelligence, and continuously adapting as software ecosystems evolve.

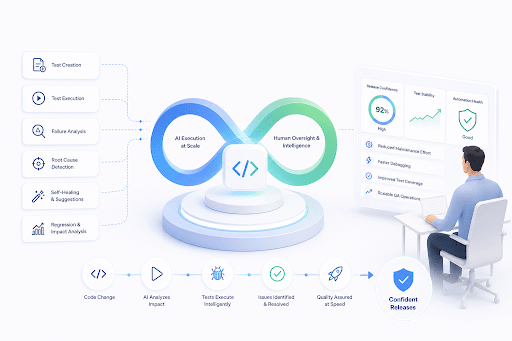

Why Human-in-the-Loop QA Is Becoming Critical

Research around collaborative AI systems consistently shows that the strongest outcomes emerge when humans and AI work together rather than independently. AI systems excel at scale, execution speed, pattern recognition, and operational consistency. Human teams excel at strategic reasoning, contextual understanding, business prioritization, and risk evaluation.

The organizations seeing meaningful success with AI-powered software testing are the ones combining these strengths instead of attempting to replace one with the other.

That balance becomes especially important in enterprise QA environments where trust, accountability, and release confidence directly impact business outcomes. If AI attempts to make every testing decision autonomously, teams lose trust in the system. If humans continue managing all repetitive execution manually, organizations lose scalability and speed.

Human-in-the-Loop QA solves this problem by allowing AI to manage operational complexity while human teams retain strategic oversight and decision-making authority. That combination creates a far more resilient and scalable approach to intelligent test automation.
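One way to picture this division of labor is a simple routing gate: the AI proposes changes, routine high-confidence ones are applied automatically, and anything that touches release decisions goes to a person. The sketch below is illustrative only. The `Proposal` type, the `route` function, and the 0.9 threshold are hypothetical names and values chosen for this example, not part of any specific product.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """A change suggested by the AI (hypothetical structure for illustration)."""
    action: str              # e.g. "update locator for #submit"
    confidence: float        # model's self-reported confidence, 0.0 to 1.0
    affects_release: bool    # True if the change influences a go/no-go decision


def route(proposal: Proposal, auto_threshold: float = 0.9) -> str:
    """Decide who handles a proposal: the AI alone or a human reviewer.

    The threshold is an assumed value; a real team would tune it.
    """
    # Release-impacting decisions always stay with humans.
    if proposal.affects_release:
        return "human_review"
    # Routine, high-confidence changes are applied without waiting.
    if proposal.confidence >= auto_threshold:
        return "auto_apply"
    # Everything else is queued for a person to confirm.
    return "human_review"


if __name__ == "__main__":
    proposals = [
        Proposal("update locator for #submit", 0.97, False),
        Proposal("quarantine checkout test", 0.95, True),
        Proposal("rewrite login assertion", 0.60, False),
    ]
    for p in proposals:
        print(f"{p.action}: {route(p)}")
```

The design choice worth noting is that the release check comes before the confidence check, so no confidence score can bypass human authority over release decisions.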

There’s a line from Entropy that explains this perfectly: “Winning systems are not the best AI or best humans, but the best collaboration between them” (MDPI Entropy).

Why AiScriptBuddy Was Built Differently

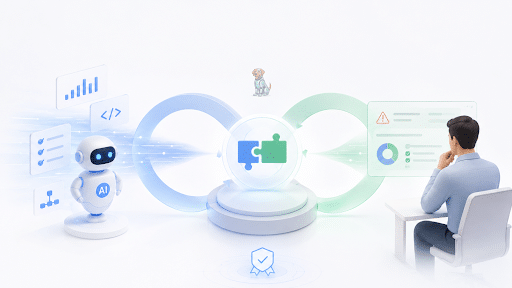

At Webomates, we recognized early that enterprise QA teams did not simply need another AI chatbot layered onto existing workflows. They needed a persistent AI-powered QA automation platform capable of participating intelligently inside real engineering operations.

That realization led to the development of AiScriptBuddy.

Unlike traditional chatbot-based automation tools, AiScriptBuddy was designed as a continuous AI-driven QA ecosystem that retains workflow context and actively supports testing operations over time. Its built-in AIBuddy functions less like a transactional assistant and more like an operational testing partner that evolves alongside the product and the engineering organization.

This changes the nature of QA automation entirely.

When a test fails, the system does not simply surface an error message and wait for human intervention. Instead, AIBuddy helps teams understand where the failure originated, what likely changed in the workflow, whether flaky tests or locator failures are involved, and how similar issues may have been resolved previously. By continuously retaining testing intelligence, the system reduces the repetitive operational burden that often consumes enterprise QA teams.
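To make the idea of retained testing intelligence concrete, here is a minimal sketch of how stored run history could be used to label a new failure. It is not how AIBuddy works internally, which the article does not describe. The `RunRecord` type, the `classify_failure` function, the label names, and the "two or more pass/fail flips means flaky" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    """One historical test execution (hypothetical structure)."""
    test_id: str
    passed: bool
    error: str = ""  # raw error text, empty when the run passed


def classify_failure(history: list[RunRecord], latest: RunRecord) -> str:
    """Label the latest result using prior runs of the same test."""
    if latest.passed:
        return "passing"

    # Element-lookup errors usually point to a changed UI locator.
    message = latest.error.lower()
    if "nosuchelement" in message or "locator" in message:
        return "locator_failure"

    # Outcomes of earlier runs for this test, oldest first.
    prior = [r.passed for r in history if r.test_id == latest.test_id]
    if not prior:
        return "new_failure"

    # Count how often the outcome flipped between consecutive runs.
    flips = sum(1 for a, b in zip(prior, prior[1:]) if a != b)
    if flips >= 2:  # assumed cutoff for calling a test flaky
        return "flaky"

    # Stable history: either it just broke or it has been broken for a while.
    return "regression" if prior[-1] else "persistent_failure"


if __name__ == "__main__":
    history = [RunRecord("checkout", ok) for ok in (True, False, True, False, True)]
    latest = RunRecord("checkout", False, "AssertionError: total mismatch")
    print(classify_failure(history, latest))
```

Without the stored history, every failure in this sketch would look identical, which is the continuity gap described earlier for stateless chatbot workflows.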

How AiScriptBuddy Helps Enterprises Scale QA Operations

One of the largest hidden costs in enterprise QA automation is not initial script creation, but long-term maintenance. As products evolve, automation debt grows rapidly. Test suites become fragile, debugging cycles increase, and teams spend more time maintaining automation than accelerating delivery.

AiScriptBuddy was built specifically to address that challenge.

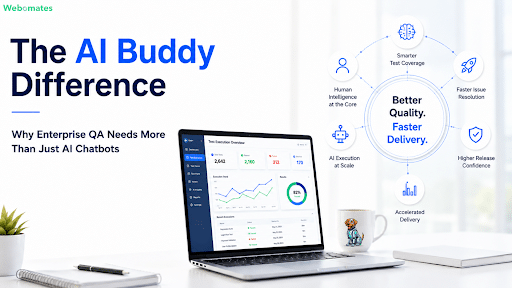

By combining Human-in-the-Loop intelligence with persistent AI-assisted workflows, the platform helps organizations reduce automation maintenance effort, improve regression testing efficiency, accelerate debugging, and scale continuous testing operations without proportionally increasing QA overhead.

For enterprises managing large-scale CI/CD pipelines and rapid release cycles, this creates measurable operational value. Teams can improve release confidence, reduce test instability, accelerate delivery timelines, and increase engineering productivity without sacrificing quality assurance standards.

More importantly, organizations maintain human oversight while still benefiting from AI-driven execution scale.

That is where the real business value of AI-powered QA automation begins to emerge.

The Future of AI in Software Testing

I do not believe the future of enterprise software testing will be fully autonomous AI. At the same time, I also do not believe traditional manual testing models alone can support the speed and complexity of modern software delivery.

The future belongs to collaborative intelligence systems where AI handles operational scale while humans retain strategic control, contextual understanding, and decision-making authority.

The organizations that succeed in the next phase of software delivery will not simply automate more tests. They will build adaptive quality engineering systems capable of learning, evolving, and scaling alongside their products and business priorities.

That is precisely the direction the industry is moving toward. And that is exactly the future AiScriptBuddy was designed for. Try AiScriptBuddy.ai now to see this human-AI collaboration in real time. It has twice the AI. It’s in the name.

Aseem, Founder & CEO of Webomates, created Webomates CQ, an AI-driven testing platform that cuts testing time by 10x with AiGenerate and accelerates test maintenance by 10x using AiHealing, with guaranteed 24-hour execution. A multi-technical Emmy award winner with AI automation patents, he writes about AI-first testing and faster, simpler software delivery.

Tags: AI Chatbots, AI QA, AI Testing, Artificial Intelligence, Automation Maintenance, Enterprise QA, Human in the Loop AI, Intelligent Test Automation, QA Automation, Software Testing
