
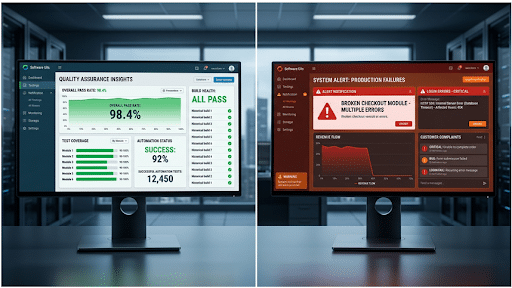

QA budgets continue to grow, yet many organizations still face production defects, release delays, and customer impact, raising questions about QA cost versus quality and the overall software testing budget.

One of the best-known examples is Knight Capital Group, which lost $440 million in 45 minutes in 2012 after faulty software was released to production. The cost of that single failure likely exceeded what had been spent on testing over several years.

In many enterprises, as much as 80% of QA spend can become trapped in low-yield work. As a result, many QA budgets are built to show effort, not improve outcomes. This is why spending rises while quality does not, exposing weak testing spend effectiveness.

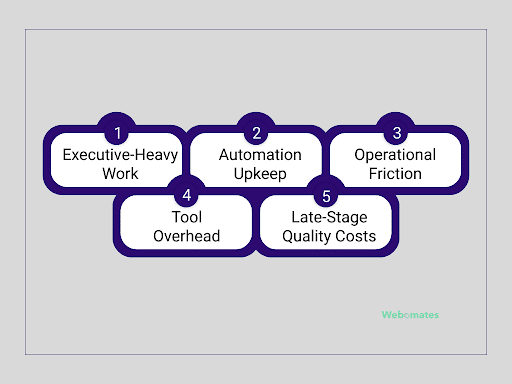

What the 80% Actually Represents

In many organizations, the majority of QA spend goes toward activities that keep delivery moving but do not materially improve release confidence. A typical budget split includes:

Execution-Heavy Work

Testing costs often rise simply because release activity increases. More changes usually mean more regression cycles, more reruns, and more teams validating the same core functionality.

According to McKinsey, AI-enabled software organizations improved software quality by 31% to 45%, showing how much performance can be unlocked when repetitive work is reduced. The issue is not how often testing happens. It is that many businesses still treat every new release as a reason to add more cost, which is an inefficient way to grow.

Automation Upkeep

Automation is meant to reduce cost, yet in many organizations, a growing share of QA spend goes into maintaining the automation itself. As applications change, scripts break, locators fail, and workflows need updates, turning automation into an ongoing support cost.

Gartner predicts that by 2028, 90% of enterprise software engineers will use AI code assistants, up from less than 14% in early 2024. Automation only works financially when maintenance costs stay lower than the manual effort it replaces.
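The break-even condition in that last sentence can be made concrete with simple arithmetic. The sketch below is illustrative only: the `AutomationCase` fields, the function names, and every number are hypothetical assumptions, not figures from any real program.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AutomationCase:
    """Hypothetical inputs, all measured in person-hours."""
    manual_hours_per_cycle: float       # effort to run the suite by hand once
    build_hours: float                  # one-time effort to automate the suite
    maintenance_hours_per_cycle: float  # script repair effort per release cycle


def net_savings(case: AutomationCase, cycles: int) -> float:
    """Hours saved (positive) or lost (negative) after `cycles` release cycles."""
    saved_per_cycle = case.manual_hours_per_cycle - case.maintenance_hours_per_cycle
    return saved_per_cycle * cycles - case.build_hours


def break_even_cycles(case: AutomationCase) -> Optional[float]:
    """Cycles needed to recover the build cost, or None if it never pays back."""
    saved_per_cycle = case.manual_hours_per_cycle - case.maintenance_hours_per_cycle
    if saved_per_cycle <= 0:
        return None  # maintenance costs at least as much as the manual run
    return case.build_hours / saved_per_cycle


# Two made-up suites that differ only in how much upkeep they need.
healthy = AutomationCase(manual_hours_per_cycle=40, build_hours=200,
                         maintenance_hours_per_cycle=8)
brittle = AutomationCase(manual_hours_per_cycle=40, build_hours=200,
                         maintenance_hours_per_cycle=45)

print(break_even_cycles(healthy))  # 6.25
print(break_even_cycles(brittle))  # None
```

In this toy model, the brittle suite never recovers its build cost because each cycle of script repair costs more than the manual run it was meant to replace.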

Operational Friction

When organizations use AI in Quality Engineering, they report an average 19% productivity boost, according to Capgemini’s World Quality Report. This highlights how much efficiency is often lost in manual handoffs, approvals, and fragmented workflows.

In many enterprises, QA spend is lost in delays between teams rather than in testing itself. Environment waits and dependency bottlenecks extend release cycles while adding little quality value. When more time is spent waiting than validating risk, cost rises without improving outcomes.

Tool Overhead

Many organizations keep adding QA tools over time, creating overlapping platforms with low utilization. License costs rise, while usage remains fragmented across teams. When multiple tools solve similar problems, budgets grow while adoption stays limited.

Late-Stage Quality Costs

Late-stage defects are expensive because they create rework, release delays, and recovery effort after deployment. DORA research from Google shows that high-performing technology organizations achieve lower change failure rates and faster recovery times, highlighting the value of catching issues before production.
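For teams that want to track the two measures mentioned here, a minimal sketch follows. It assumes a hypothetical `Deployment` record with illustrative field names and made-up data. Change failure rate is taken as the share of deployments that caused a production incident, and recovery time is averaged over those incidents.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Deployment:
    """Hypothetical deployment record; field names are illustrative."""
    release_id: str
    caused_incident: bool
    minutes_to_restore: Optional[float] = None  # set only when an incident occurred


def change_failure_rate(deployments: List[Deployment]) -> float:
    """Fraction of deployments that led to a production incident."""
    if not deployments:
        return 0.0
    failures = sum(1 for d in deployments if d.caused_incident)
    return failures / len(deployments)


def mean_minutes_to_restore(deployments: List[Deployment]) -> Optional[float]:
    """Average recovery time across failed deployments, or None if none failed."""
    times = [d.minutes_to_restore for d in deployments
             if d.caused_incident and d.minutes_to_restore is not None]
    return sum(times) / len(times) if times else None


# Made-up history: five deployments, two of which caused incidents.
history = [
    Deployment("r1", False),
    Deployment("r2", True, minutes_to_restore=30),
    Deployment("r3", False),
    Deployment("r4", True, minutes_to_restore=90),
    Deployment("r5", False),
]

print(change_failure_rate(history))      # 0.4
print(mean_minutes_to_restore(history))  # 60.0
```

Tracking these values per quarter gives a simple way to check whether earlier validation is actually reducing late-stage cost.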

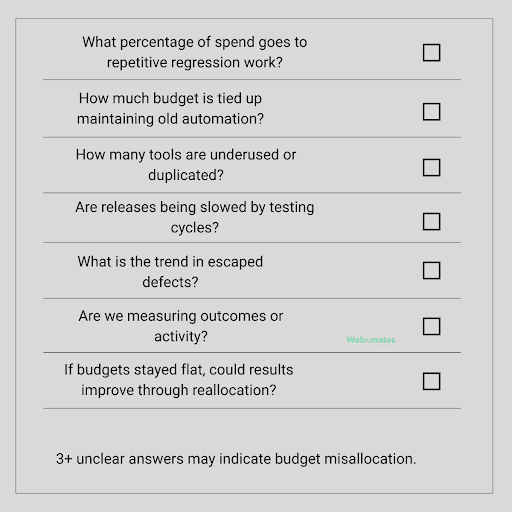

Questions Every Leader Should Ask About QA Spend

Before increasing QA budgets, leaders should first assess whether the current spend is creating measurable value or simply being absorbed by low-value work.

Where High-Performing Organizations Invest — and What It Prevents

High-performing organizations do not measure QA success by output alone. They direct budgets toward investments that improve speed, stability, and measurable returns. The difference here is not bigger budgets, but a better test budget allocation strategy.

Prevention Over Rework

Quality becomes expensive when issues are discovered during release or after deployment, when fixes are slower, broader, and more disruptive to the business. Stronger operating models prioritize earlier validation and faster feedback.

In one large North American media and telecom transformation program, Webomates detected issues in staging across UI, API, Salesforce, and integration systems before release, reducing downstream disruption and improving production quality. This reduces spend on rework and release recovery, leaving more budget available for speed and innovation.

Automation With Clear ROI

Automation often begins as a cost-saving initiative but loses value when scripts break, applications change, and maintenance efforts keep increasing. The result? Weaker ROI and automation that becomes expensive to sustain.

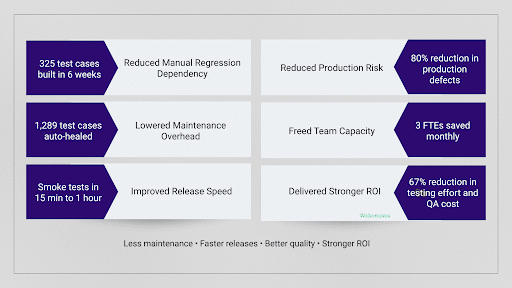

Webomates delivered a 67% reduction in testing effort and QA cost, while AiHealing® kept automation current as applications changed, reducing ongoing script maintenance.

Risk-Based Release Decisions

Many teams test low-risk and high-risk releases the same way. The result is slower approvals, overloaded regression cycles, and delayed delivery where speed matters most.

Webomates supported faster release decisions with smoke test results in 15 minutes to 1 hour, overnight regression completed by the next business morning, and prioritized defect reporting for faster triage.
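As a general illustration of risk-based test selection, the sketch below scores a release and maps the score to a test scope. It does not describe how Webomates or any specific tool works; the `Release` fields, the weights, and the thresholds are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Release:
    """Hypothetical release descriptor; fields and weights are illustrative."""
    name: str
    files_changed: int
    touches_critical_path: bool   # e.g. payments, login, billing
    recent_escaped_defects: int   # production defects recently found in this area


def risk_score(release: Release) -> int:
    """Combine simple signals into a 0-100 score (weights are assumptions)."""
    score = min(release.files_changed, 40)                  # change size, capped
    score += 40 if release.touches_critical_path else 0     # business criticality
    score += min(release.recent_escaped_defects * 10, 20)   # defect history, capped
    return score


def choose_test_depth(release: Release) -> str:
    """Map the score to a test scope instead of running everything every time."""
    score = risk_score(release)
    if score >= 60:
        return "full regression"
    if score >= 30:
        return "targeted regression"
    return "smoke only"


print(choose_test_depth(Release("copy-fix", 2, False, 0)))          # smoke only
print(choose_test_depth(Release("report-tweak", 25, False, 1)))     # targeted regression
print(choose_test_depth(Release("checkout-refactor", 35, True, 1))) # full regression
```

The point of the design is that low-risk changes get fast feedback while the full regression budget is reserved for releases that can actually hurt the business.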

Stable Environments and Data

Testing slows down when environments are unstable or when test data is unreliable. Teams spend time rerunning failed cycles, troubleshooting setup issues, and waiting on dependencies instead of validating release risk. Stable delivery systems are central to software quality assurance ROI.

Webomates enabled cross-environment validation, faster regression cycles, and structured defect reporting, helping reduce delays, reruns, and blocked releases across complex applications.

Delivery loses momentum when QA becomes the last approval step for every release. Development moves ahead, while approvals, testing decisions, and unresolved defects get pushed to the end of the cycle.

Webomates enabled faster quality feedback through regular test reviews, prioritized defect reporting, and accelerated validation cycles. This contributed to an 80% reduction in production defects, helping teams identify and address issues earlier in the delivery cycle.

How Webomates Helps Reallocate QA Spend

Webomates helps organizations move QA budgets away from repetitive execution and toward measurable business outcomes through a smarter QA budget allocation strategy.

- Reduced manual regression dependency by building large-scale automation quickly: 325 automated test cases in six weeks, later expanded to 746, plus 563 test cases for Salesforce integration coverage.

- Lowered maintenance overhead through AiHealing®, resolving false failures and automatically healing 1,289 test cases in one application stream, 383 in another, and 581 in Salesforce testing.

- Improved release speed with smoke test results in 15 minutes to 1 hour, overnight regression by 9 AM EST, and full regression within 24 hours.

- Reduced production risk through earlier defect detection, contributing to an 80% reduction in production defects.

- Freed team capacity for higher-value work by saving 3 FTEs monthly, plus 103 person-weeks and 134 person-weeks of manual effort across programs.

- Delivered stronger ROI from existing spend with a 67% reduction in testing effort and 67% reduction in QA costs, without relying on linear headcount growth.

In short, Webomates helps convert QA spend from maintenance-heavy activity into faster releases, stronger quality, and more efficient use of resources.

Conclusion

The organizations gaining speed and stability are not always spending more. They are simply using QA budgets with clearer priorities and stronger discipline. When budgets remain tied up in repetitive execution, maintenance-heavy automation, and late defect discovery, your returns will remain limited.

But when you apply the same investment to intelligent automation, faster validation, and earlier defect detection, quality becomes a business advantage. This is the core of how to optimize a QA budget for better ROI. Webomates helps organizations achieve faster releases, lower operating costs, and stronger production results from the budgets they already have.


FAQs

- How can organizations reduce QA costs without losing quality?

The most effective way is to remove low-value effort, not reduce quality controls. This usually means automating repetitive regression, stabilizing environments, improving defect prevention, and focusing testing depth based on business risk. This is how leading teams can reduce QA costs without losing quality.

- How should leaders evaluate QA cost vs quality?

Leaders should evaluate QA spend through the outcomes it creates, not by the budget size alone. Instead of focusing only on how much is being spent, a better measure is what that investment is preventing or improving. Stronger indicators include escaped defects, release speed, recovery time, automation maintenance effort, and customer impact. This gives a clearer view of QA cost vs quality.

- Which QA activities usually deliver the strongest ROI?

The highest returns often come from risk-based automation, earlier defect detection, stable environments, reliable test data, and production feedback loops. These areas usually outperform spending on excess reruns or maintenance-heavy testing models, improving software quality assurance ROI.

- Why does QA budget misallocation happen even in mature enterprises?

It often happens gradually. As release volumes grow, organizations add tools, people, and testing cycles to keep delivery moving. Over time, spend increases around activity rather than outcomes. This is why many mature enterprises still struggle with QA budget misallocation despite larger investments.

Aseem, Founder & CEO of Webomates, created Webomates CQ, an AI-driven testing platform that cuts testing time by 10x with AiGenerate and accelerates test maintenance by 10x using AiHealing, with guaranteed 24-hour execution. A multi-technical Emmy award winner with AI automation patents, he writes about AI-first testing and faster, simpler software delivery.

