By 2026, quality engineering will no longer be defined by deep expertise in a single industry. Product ecosystems are expanding, technology stacks are converging, and AI is rapidly compressing how quickly domain knowledge can be accessed. As a result, QA excellence is shifting away from narrow specialization toward adaptability, cross-domain intelligence, and AI-augmented execution.

Traditional QA models were built around long-term immersion in one business domain. That depth created strong subject-matter experts, but it also assumed relative industry stability. That assumption no longer holds. Markets evolve faster than team structures, and AI is accelerating both product change and delivery expectations.

In this environment, QA capability is increasingly measured by how quickly teams can move between domains, recognize unfamiliar risk patterns, and apply quality strategies that hold up regardless of industry context. This is where multi-domain testing and AI upskilling converge to redefine what QA excellence truly means.

The Structural Risk of Single-Domain QA Models

Single-domain testing models introduce fragility into quality organizations. When expertise becomes tightly coupled to one industry, redeployment slows, learning curves lengthen, and quality coverage weakens whenever business priorities shift.

Industry-specific downturns make this risk visible, but the underlying issue is structural. QA teams anchored to one domain struggle to absorb new products, new verticals, and emerging regulatory or architectural demands. Over time, quality capability becomes harder to scale, not easier.

Multi-domain exposure counteracts this. Testers who operate across industries carry transferable testing heuristics, broader failure pattern recognition, and stronger contextual judgment. This enables faster onboarding, more effective risk analysis, and greater organizational resilience when portfolios evolve.

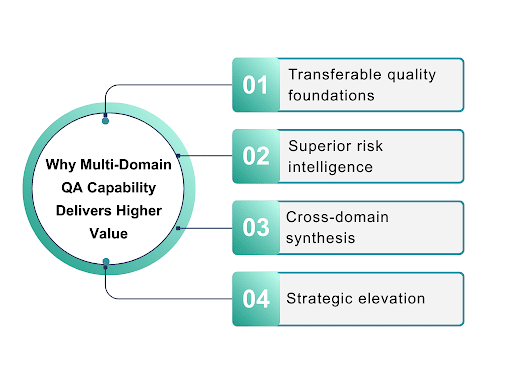

Why Multi-Domain QA Capability Delivers Higher Value

The strongest testing skills are not domain-bound. What changes is context, not the underlying discipline.

1) Transferable quality foundations

Exploratory analysis, risk-based test design, automation architecture, and data validation apply across industries, enabling QA capability to scale without being rebuilt.

2) Superior risk intelligence

FinTech sharpens sensitivity to fraud and compliance. Health systems reinforce safety and privacy awareness. Commerce platforms develop an instinct for scalability and revenue protection.

3) Cross-domain synthesis

When these perspectives converge, prioritization improves, blind spots shrink, and coverage aligns more closely with business impact.

4) Strategic elevation

Over time, QA organizations shift from execution layers to quality intelligence systems. Patterns emerge across products, systemic failure modes become visible, and QA begins shaping how risk is understood.
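Risk-based test design, named above as a transferable foundation, can be illustrated with a small sketch. The example below is hypothetical: the `RiskArea` type, the 1 to 5 scales, and the sample entries are assumptions made for illustration, not part of any Webomates product. It ranks test areas from different industries by a simple likelihood-times-impact score, one common way to express risk-based prioritization.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskArea:
    """A hypothetical test area with an assumed 1-5 likelihood and impact rating."""
    name: str
    domain: str       # e.g. "fintech", "health", "commerce"
    likelihood: int   # 1 (rare) .. 5 (frequent); assumed scale
    impact: int       # 1 (minor) .. 5 (severe); assumed scale

    @property
    def risk_score(self) -> int:
        # Simple likelihood x impact score; real teams may weight factors differently.
        return self.likelihood * self.impact


def prioritize(areas: list[RiskArea]) -> list[RiskArea]:
    """Return areas ordered from highest to lowest risk score."""
    # Ties are broken by name so the ordering is deterministic.
    return sorted(areas, key=lambda a: (-a.risk_score, a.name))


# Illustrative entries only; the ratings are made up for the example.
areas = [
    RiskArea("payment fraud checks", "fintech", likelihood=3, impact=5),
    RiskArea("patient record privacy", "health", likelihood=2, impact=5),
    RiskArea("checkout under peak load", "commerce", likelihood=4, impact=4),
    RiskArea("profile page layout", "commerce", likelihood=3, impact=1),
]

for area in prioritize(areas):
    print(f"{area.risk_score:>2}  {area.domain:<9} {area.name}")
```

The scoring logic never refers to the industry itself, which is the point: the same prioritization routine works whether the inputs come from FinTech, health, or commerce, and only the ratings change with context.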

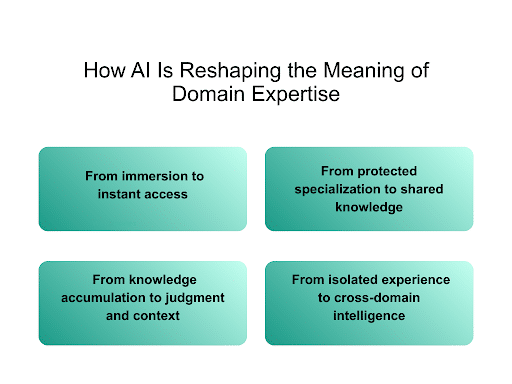

How AI Is Reshaping the Meaning of Domain Expertise

AI is compressing the time required to access and operationalize domain knowledge. Regulatory logic, workflows, and data models are increasingly available on demand. This is shifting where expertise lives and how QA value is created.

1) From immersion to instant access

Regulatory rules, business workflows, and realistic test data can now be generated, analyzed, and simulated rapidly. What once required long immersion is increasingly available on demand.

2) From protected specialization to shared knowledge

As foundational domain understanding becomes easier to obtain, it stops being a primary differentiator. Deep single-domain immersion alone no longer provides the same long-term strategic advantage.

3) From knowledge accumulation to judgment and context

AI does not compare risk across industries, intuit systemic impact, or design strategies rooted in real-world failure behavior. These remain uniquely human strengths.

4) From isolated experience to cross-domain intelligence

Multi-domain exposure strengthens contextual reasoning, pattern recognition, and risk interpretation, allowing humans to make sense of what AI produces.

Where it converges

When AI fluency is paired with cross-domain experience, QA capability accelerates. Context switching improves, automation becomes more intelligent, and test strategy shifts from reactive validation to predictive quality design.

Outcome: Domain-adaptive QA capability

QA organizations move from being domain-bound to domain-adaptive, capable of operating effectively across industries, platforms, and evolving business models.

The Roles Emerging Around Multi-Domain Thinking

As products diversify and platforms converge, the QA roles rising fastest are those responsible for strategy, not execution. Architecture, quality governance, platform quality leadership, and organizational test strategy increasingly demand a cross-domain perspective.

These roles require rapid domain absorption, reusable quality frameworks, and the ability to guide teams through unfamiliar business contexts. Decisions must hold across industries, not just within them.

Organizations building these capabilities are investing not only in skills but in institutional adaptability. Multi-domain QA functions scale more effectively, support broader portfolios, and provide leadership teams with clearer risk visibility as products evolve.

Building Multi-Domain, AI-Ready QA Capability

Multi-domain strength is not created through surface exposure. It develops through deliberate rotation across industries, reinforcement of core testing fundamentals, and systematic AI upskilling.

Strong fundamentals allow teams to transfer effectively between contexts. AI fluency enables automation acceleration, smarter coverage design, and deeper insight extraction from test execution. Together, they form the foundation of modern QA capability.

This combination allows QA organizations to operate with greater speed, stronger foresight, and higher strategic relevance, regardless of the industries they support.

What Multi-Domain and AI Capability Unlocks

By 2026, QA organizations that combine multi-domain experience with AI-enabled execution will onboard faster, detect systemic risk earlier, and sustain quality under continuous change. Automation frameworks become more resilient. Collaboration with product and engineering strengthens. Test data shifts from validation to intelligence.

“Quality moves upstream. Risk becomes visible sooner. Decision-making improves.”

In this model, QA excellence is no longer defined by what has been tested, but by how effectively organizations anticipate failure, adapt to new domains, and protect delivery outcomes as complexity increases.

The Future of QA Excellence

QA excellence in 2026 is not built on deeper specialization alone. It is built on breadth, adaptability, and AI-augmented intelligence.

Organizations that invest in multi-domain QA capability and AI upskilling build quality systems that scale with change rather than resist it. They strengthen risk awareness, increase delivery resilience, and position quality as a strategic function rather than a reactive one.

Domain expertise should strengthen QA, not constrain it. Webomates supports organizations building AI-ready QA capability by combining intelligent automation, self-healing execution, and domain-agnostic test design, helping quality teams operate effectively across products, platforms, and industries.

Ready to move from domain-bound QA to domain-adaptive quality intelligence?

Webo.AI enables enterprises to scale multi-domain testing with AI-powered automation, risk intelligence, and resilient execution frameworks built for evolving product ecosystems.

👉 Explore Webo.AI or Schedule a Demo to see how AI-ready QA scales without structural fragility.

FAQs

1. What is multi-domain testing in QA, and why does it matter in 2026?

Multi-domain testing refers to a QA capability that spans multiple industries rather than a single business domain. In 2026, this approach matters because product ecosystems evolve faster than domain-specific teams can adapt. Cross-domain QA improves resilience, speeds up onboarding, and strengthens risk detection. It allows quality engineering teams to remain effective as business priorities shift.

2. Why are single-domain QA models becoming a risk for organizations?

Single-domain QA models limit adaptability and slow response when products or industries change. When expertise is tightly coupled to one domain, scaling quality becomes difficult during expansion or diversification. This creates structural risk, not just skill gaps. Multi-domain QA reduces dependency on static expertise and improves organizational flexibility.

3. How does AI change the meaning of domain expertise in quality engineering?

AI accelerates access to domain knowledge by generating workflows, regulations, and test data on demand. As a result, deep immersion in one industry is no longer the primary source of QA value. Human expertise shifts toward judgment, contextual reasoning, and risk interpretation across domains. AI and cross-domain experience together redefine what effective domain expertise looks like.

4. What QA skills become most valuable with multi-domain and AI adoption?

Transferable skills such as risk-based testing, exploratory analysis, automation architecture, and failure pattern recognition gain importance. These skills remain effective regardless of industry context. AI fluency further enhances their impact by improving test coverage, execution speed, and insight extraction. Together, they enable domain-adaptive QA capability.

5. How do multi-domain testing and AI upskilling improve QA excellence and business outcomes?

Multi-domain testing and AI upskilling help QA teams onboard faster, identify systemic risks earlier, and sustain quality amid continuous change. They shift QA from reactive validation to predictive quality intelligence. This improves delivery resilience, decision-making, and collaboration with product and engineering teams. In 2026, this combination defines modern QA excellence.

Aseem, Founder & CEO of Webomates, created Webomates CQ, an AI-driven testing platform that cuts testing time by 10x with AiGenerate and accelerates test maintenance by 10x using AiHealing, with guaranteed 24-hour execution. A multi-technical Emmy award winner with AI automation patents, he writes about AI-first testing and faster, simpler software delivery.