Most software teams today release more frequently, automate more tests, and increasingly rely on AI in their development workflows. Yet release confidence has not improved at the same pace. Despite more tooling and coverage, teams still debate readiness, absorb last-minute rework, and recover from issues that surface too late.

Testing is no longer only a quality concern. It has become a scaling constraint. As delivery velocity increases and AI accelerates test creation, traditional testing models create friction unless cost, headcount, or risk is allowed to rise alongside them.

This pressure is reflected across the industry. According to a report, the global software testing market is projected to grow from roughly USD 48 billion in 2025 to almost USD 94 billion by 2030. This growth is driven largely by increased adoption of automation and AI-first testing tools, as organizations invest heavily to scale validation without slowing delivery.

Many teams now use AI-assisted tools to convert written test intent into automated execution. This has improved speed and surface-level coverage, but it has also introduced a new challenge. More tests and faster feedback do not automatically translate into clearer release decisions when human judgment, automation, and AI operate in isolation.

This shift isn’t about automation replacing testers. It’s about redefining how human judgment, automation, and AI work together. What was once viewed as “manual vs automation” testing is no longer a binary choice.

Welcome to Hybrid AI Assurance.

Manual vs Automation Testing: Why You Need Both

Many teams still treat manual and automation testing as separate choices, even though they are meant to work together. This separation creates blind spots that surface late in delivery, when fixes are expensive and confidence is already compromised.

Manual testing brings context and judgment, while automation provides consistency across releases.

When teams rely primarily on manual testing:

- User intent, behavior, and flow issues are surfaced early

- Usability gaps and visual inconsistencies are caught before release

- Edge cases appear through exploration rather than documentation

However, manual approaches do not scale. Large regression suites and multi-environment validation become bottlenecks as release frequency increases.

When teams rely primarily on automation:

- Validation runs quickly and consistently across releases

- Technical failures are detected early and repeatedly

- Baseline confidence is maintained as systems evolve

But automation alone misses the human side of testing. Issues like spacing problems, visual misalignment, overlapping fields, and usability friction often pass automation checks unnoticed.

This is why the manual vs automation line no longer works. Reliable outcomes come from using both together, with clear ownership of what each is responsible for.

The implication is operational, not theoretical. How quality work is distributed across humans, automation, and AI directly affects release predictability, cost of delay, and team effectiveness.

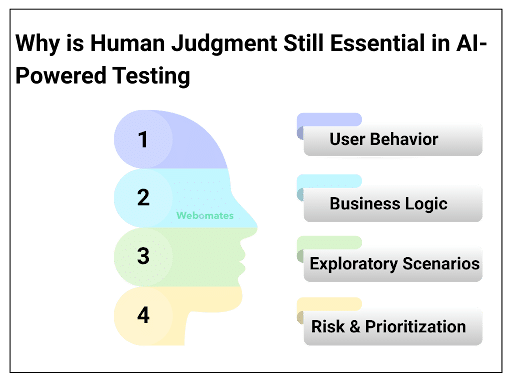

Why Human Judgment Is Still Essential in AI-Powered Testing

AI can generate tests, expand coverage, and execute validations at scale. What it cannot do is decide whether a system is behaving as intended in production. That distinction becomes critical as teams rely more heavily on AI-generated signals that increasingly influence whether a release proceeds or is held back.

Human judgment plays a decisive role in several areas where AI output alone is insufficient, including:

- User Behavior: AI checks what should happen, but humans observe what actually happens. Users scroll differently on mobile, rely on gestures, and use the interface in ways that expose issues that AI may not naturally detect.

- Business Logic: Some rules are not documented clearly or change frequently. Humans can judge if the output aligns with how the business really works, especially in cases where AI may accept technically correct but contextually wrong results.

- Exploratory Scenarios: AI follows what is written, while humans explore beyond the script. This helps you find gaps, assumptions, and alternate paths that AI would never attempt unless explicitly instructed.

- Risk and Prioritization: AI cannot judge which areas matter most to the business. Humans decide which flows need deeper validation, where AI-generated tests are enough, and where manual observation is still important.

Human judgment remains critical because product quality is not only about functional correctness. It is also about intent, clarity, and usability. As AI accelerates test creation, human oversight becomes more important, not less, in deciding whether the system is truly ready to ship.

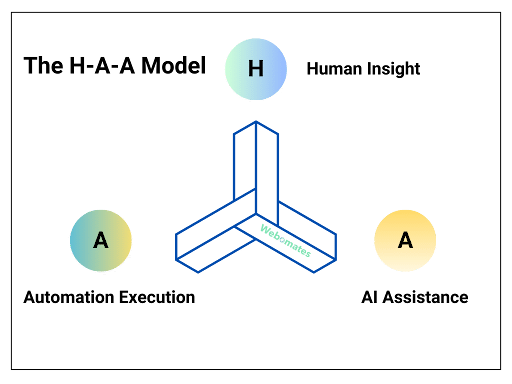

Humans + Automation + AI: The Perfect Approach (The H-A-A Model)

The H-A-A Model brings together three strengths that testing teams already rely on. Instead of treating human insight, automation execution, and AI assistance as separate steps, the model defines how they work together as a single system that supports reliable release decisions.

H: Human Insight

Humans bring the context. They notice the subtle issues that appear only during real interaction, such as unexpected pop-ups, irrelevant tooltips, inconsistent labels, and missing confirmations that are easy to overlook in scripted execution. More importantly, they decide whether observed behavior aligns with intent, usability expectations, and business priorities. This layer owns judgment, risk assessment, and release readiness.

A: Automation Execution

Automation provides consistency and scale. It runs regression suites across browsers, validates stable API responses, and exposes issues that arise only under repeated execution, such as timing problems and performance degradation. This layer owns repeatability, baseline confidence, and early detection as systems change.

A: AI Assistance

AI accelerates the path from intent to execution. It converts plain English test cases into automation scripts, expands a simple input validation into multiple variations, and identifies missing checks like absent error messages or boundary value conditions.

It also updates locator references when UI elements shift, reducing the maintenance effort that would otherwise slow delivery. Its primary contribution is faster test creation, broader coverage, and lower maintenance overhead as systems evolve.
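To make "expands a simple input validation into multiple variations" concrete, here is a minimal sketch of boundary value expansion for one numeric rule. The function name `boundary_values` and the quantity field accepting 1 to 10 are assumptions made for illustration; they do not describe how any particular AI tool works internally.

```python
def boundary_values(minimum: int, maximum: int) -> list[tuple[int, bool]]:
    """Return (value, should_be_accepted) pairs around an inclusive integer range."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    # Classic boundary picks: just outside, on, and just inside each edge.
    candidates = [minimum - 1, minimum, minimum + 1,
                  maximum - 1, maximum, maximum + 1]
    cases = []
    seen = set()
    for value in candidates:
        if value in seen:  # narrow ranges produce overlapping candidates
            continue
        seen.add(value)
        cases.append((value, minimum <= value <= maximum))
    return cases


# Hypothetical rule: a quantity field accepts 1 to 10 inclusive.
for value, expected in boundary_values(1, 10):
    print(f"quantity={value:>2} -> expect {'accept' if expected else 'reject'}")
```

One written rule becomes six checks here. Whether 1 to 10 is the right range in the first place is still a question for the human layer.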

Teams adopting hybrid AI-assisted testing models typically see the impact not as dramatic cost cuts, but as smoother releases, faster stabilization after UI changes, and lower cost of delay. The value shows up in predictability and confidence, rather than raw test counts.

What Are the Challenges of Using AI in Testing?

AI can significantly accelerate test creation and expand coverage, but its effectiveness depends on how it is governed. Without clear structure and oversight, AI introduces predictable failure patterns that affect stability, signal quality, and release confidence.

Here are some of the common areas where these issues show up:

- AI Struggles with Inconsistent or Noisy Inputs: When logs, element naming, screenshots, or test data lack consistency, AI reflects those patterns directly. The result is automation that follows unstable selectors, misaligned flows, or incomplete scenarios that would be obvious during human review.

- AI-Generated Tests Can Become Flaky Quickly: A tiny UI shift, a new class name, or a reordered element can break multiple tests overnight. This usually happens because AI based its decisions on the specific UI state it generated from, so even small changes can cause the affected steps to fail.

- AI Misses Deeper Business Rules: It can follow the visible UI, but it does not know why the rule exists. Important checks like mandatory fields, domain-specific terminology, or conditional requirements often go unnoticed unless they are explicitly defined and reviewed by humans.

- AI Rarely Explores Conditional or High-Risk Paths by Default: If a workflow contains multiple branches, AI typically selects the most common or straightforward path. It does not explore alternate paths or high-risk variations unless those flows are clearly defined in the examples or instructions it receives.

- AI Can Create a False Sense of Coverage When Overtrusted: AI can generate a large number of tests quickly, and the volume may appear to provide strong coverage. Duplicate checks, shallow validations, and unexamined risk areas can quietly reduce confidence rather than increase it.
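The last point, volume that looks like coverage, is easy to check in a rough way. The sketch below groups tests whose steps are identical after trivial normalization, so a reviewer can compare the raw test count with the number of distinct scenarios. The suite, test names, and steps are hypothetical, and matching real generated tests would likely need something smarter than exact comparison.

```python
from collections import defaultdict


def normalize(steps: list[str]) -> tuple[str, ...]:
    """Lowercase each step and collapse whitespace so trivial wording differences match."""
    return tuple(" ".join(step.lower().split()) for step in steps)


def find_duplicates(suite: dict[str, list[str]]) -> list[list[str]]:
    """Group test names whose normalized steps are identical."""
    groups = defaultdict(list)
    for name, steps in suite.items():
        groups[normalize(steps)].append(name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical generated suite: names and steps are made up for illustration.
suite = {
    "test_login_ok": ["Open /login", "Enter valid credentials", "Expect dashboard"],
    "test_login_success": ["open /login", "enter  valid credentials", "expect dashboard"],
    "test_login_locked": ["Open /login", "Enter locked account", "Expect lockout message"],
}

duplicates = find_duplicates(suite)
unique_count = len({normalize(steps) for steps in suite.values()})
print(f"{len(suite)} tests, {unique_count} distinct scenarios, duplicates: {duplicates}")
```

A report like this does not say which scenarios are missing. It only shows that the test count overstates what is actually being validated, which is a prompt for human review.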

Conclusion: How Webomates Can Act as the Bridge between Manual and Automation

As teams move toward Hybrid AI Assurance, one practical challenge becomes obvious. Knowing what needs to be validated is rarely the problem. The friction appears when test intent has to be translated into stable, maintainable automation. This gap sits between manual thinking and automated execution, and it slows the team down even when the test idea is straightforward.

Hybrid AI Assurance becomes effective when this middle layer is supported. Testers should be able to describe a scenario in simple language and move quickly toward automated execution. Routine fixes such as locator updates also need to be handled efficiently so that teams can stay focused on quality instead of maintenance.

AiScriptBuddy supports this transition by helping you move from written intent to automated execution without extra technical effort. It reduces the gap that usually appears between planning a scenario and turning it into a stable test, making manual and automation work feel like parts of the same process instead of two separate tracks, which is the core of Hybrid AI Assurance.

If teams are generating more tests but still debating release readiness, the issue is no longer coverage. It is how quality responsibility is distributed across humans, automation, and AI. Webomates helps close the intent-to-execution gap, which is the difference between faster testing and better release decisions.

Ready to move beyond the manual vs automation debate?

Explore Webo.Ai to see how Hybrid AI Assurance works in practice, or schedule a demo to understand how it can fit into your existing testing process.

FAQs

1. Is Hybrid AI Assurance just another name for AI-driven test automation?

No, it’s not simply another name. AI-driven automation is mainly about speed and coverage, while Hybrid AI Assurance is about whether teams feel confident enough to release.

AI can help them generate and maintain tests faster, but it doesn’t decide what’s risky or what can wait. That judgment still sits with people. Automation gives consistency, AI reduces effort, and human judgment is what ultimately determines if the system is ready to ship.

2. How does this approach improve release confidence?

Release confidence improves when teams understand what the test results are really telling them. Hybrid AI Assurance works because responsibilities are clearly defined. People focus on judging risk and deciding whether the system is ready to ship. Automation takes care of the repeatable checks that need to behave the same way every time. AI helps teams create and maintain tests without letting maintenance slow them down.

When everyone knows their role, fewer issues appear late in the cycle. Leaders spend less time questioning test results and more time making release decisions they can stand behind.

3. What is the first step toward adopting Hybrid AI Assurance?

The first step is closing the gap between knowing what to test and actually getting it automated. Most teams are clear on what needs validation. The friction shows up when those ideas have to be turned into stable tests and kept running as the product changes.

This is the layer where platforms like Webomates tend to help. By supporting the handoff from intent to execution, they let teams spend less time maintaining scripts and more time treating manual and automated testing as part of the same workflow, not two separate efforts.

4. Does adopting Hybrid AI Assurance mean teams should automate less?

No, not really. The goal isn’t to automate less, but to automate with more intent.

Automation still matters, but not every check needs to be automated, and not every passing test should carry the same weight in a release decision. Over time, the conversation shifts from how many tests were run to whether the results actually help the team decide that the release is ready.
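One way to picture "not every passing test should carry the same weight" is to compare a raw pass rate with a risk-weighted one. This is a sketch under stated assumptions: the `Result` type, the test names, and the weights are hypothetical, and the weights are meant to be assigned by people who know the business, not derived automatically.

```python
from dataclasses import dataclass


@dataclass
class Result:
    name: str
    passed: bool
    weight: int  # hypothetical risk weight set by the team (higher = more critical)


def weighted_pass_rate(results: list[Result]) -> float:
    """Share of total risk weight covered by passing tests (0.0 to 1.0)."""
    total = sum(r.weight for r in results)
    if total == 0:
        return 0.0
    return sum(r.weight for r in results if r.passed) / total


# Made-up run: four low-risk checks pass, one high-risk check fails.
results = [
    Result("checkout_payment", passed=False, weight=5),
    Result("profile_avatar_upload", passed=True, weight=1),
    Result("search_filters", passed=True, weight=2),
    Result("footer_links", passed=True, weight=1),
    Result("newsletter_signup", passed=True, weight=1),
]

raw = sum(r.passed for r in results) / len(results)
print(f"raw pass rate: {raw:.0%}, weighted: {weighted_pass_rate(results):.0%}")
```

In this made-up run, four of five tests pass, yet the single failure sits in the highest-risk flow, so the weighted figure is far lower than the raw one. The number is an input to the release conversation, not a substitute for it.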

Ruchika Gupta, COO and Co-founder of Webomates, has 20+ years of experience in product delivery and global tech operations. She has held key roles at IBM, SeaChange, IPC Systems, Birlasoft, and served as President of Fonantrix Solutions. She writes about scaling operations, building strong delivery teams, and enabling smarter testing practices.
